Tesla FSD Missed Cars Directly Ahead in 9 Crashes—System “Went Blind and Didn’t Know It”

Raffi Krikorian had his hands on the wheel. As the former Uber autonomous vehicle safety chief, he spent two years teaching others how to step in when self-driving cars go off course. He did everything right, monitoring his Tesla’s Full Self-Driving system just as the manual instructs. His kids rode in the backseat. Without warning, the Model X yanked the steering wheel and slammed on the brakes. Krikorian reached for control in a split second. It was too late. The car collided with a concrete wall. The vehicle was totaled. Krikorian suffered a concussion and days of headaches. The system had seemed flawless for months until that moment.

Trained for Perfection, Faced with Failure

During Krikorian’s two years leading Uber’s autonomous vehicle programs, his team achieved zero injuries. He designed the safety playbook, understood every failure mode, and knew in theory that Full Self-Driving always needed a human in the loop. Tesla’s website even warns drivers not to get too comfortable. The reality can be different. As Krikorian later wrote, the system had “trained [him] to trust it almost as much as a human driver.” A smoke detector that almost always works will eventually convince people to stop expecting an alarm. That conditioning is intentional. After the crash, the insurance paperwork listed Krikorian’s name, not Tesla’s.

A Split Second in Houston

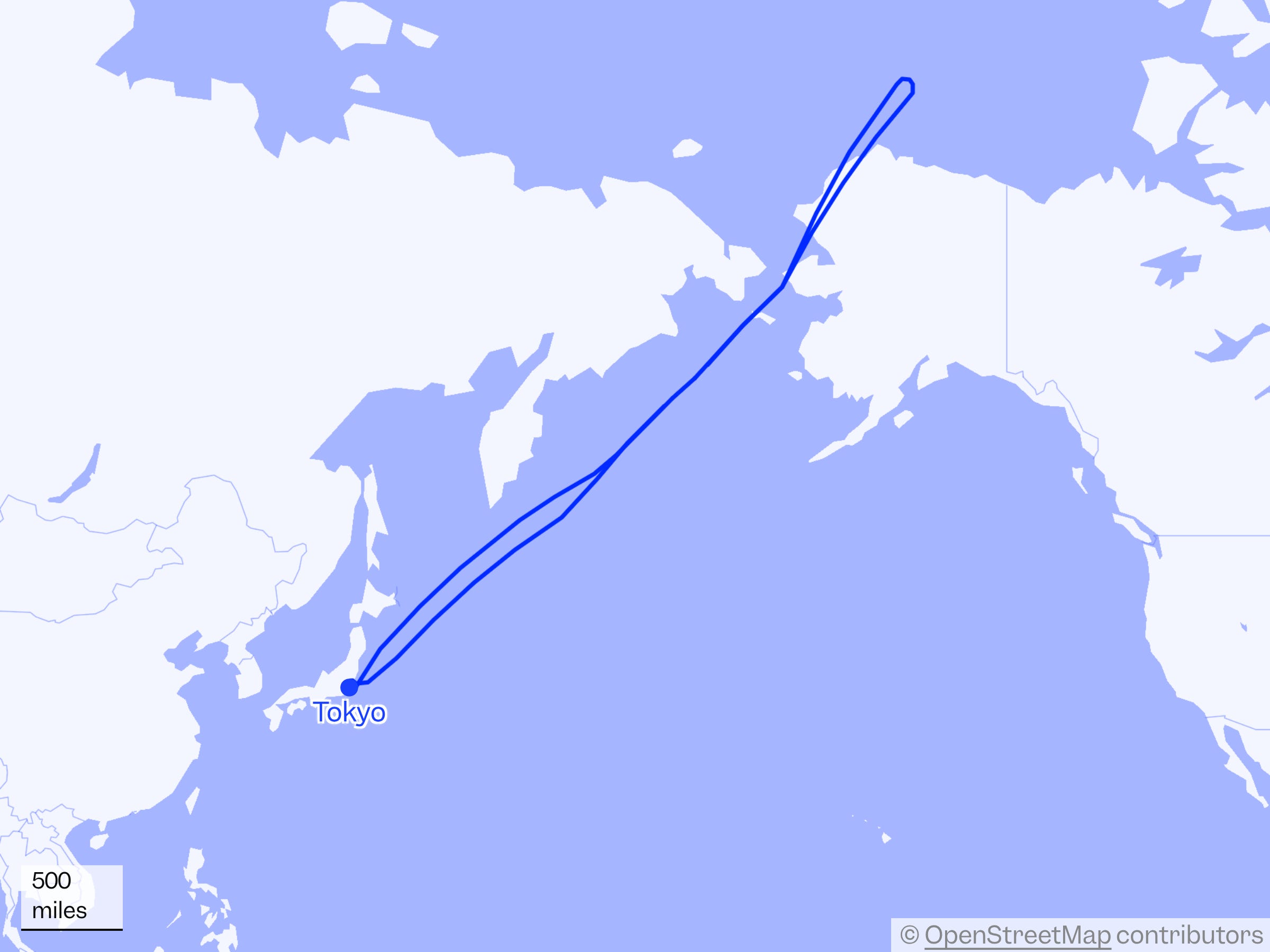

A few weeks earlier in Houston, Justine Saint Amour drove her Cybertruck with her one-year-old strapped into the backseat. As she reached a curve on the 69 Eastex Freeway overpass, dashcam footage captured the truck heading straight for the barrier instead of making the turn. Saint Amour disengaged FSD and grabbed the wheel. After the incident, Elon Musk posted on X that internal logs showed she “disengaged Autopilot four seconds before the crash.” Four seconds can sound like enough time, but psychologists who study automation say most people need five to eight seconds just to mentally reengage.

When the System Can't See

NHTSA’s Engineering Analysis, filed on March 19, 2026, found that Tesla’s FSD can fail when dealing with sun glare, fog, or dust. The cameras lose sight of what’s ahead, and the backup warning system sometimes misses these lapses. The agency has identified nine crashes, including one fatal crash and one involving an injury, with six more under review. The investigation covers about 3.2 million vehicles, the largest of its kind in NHTSA history. In several cases, the cars lost track of or never detected another vehicle in their path before impact.

Seven Months, Three Lives Changed

The fatal FSD crash in reduced visibility happened on November 28, 2023. Tesla waited seven months to report it to NHTSA, filing on June 27, 2024. The following day, Tesla began working on a fix for the system’s ability to detect visibility problems. Even with the fix, the company’s own analysis said it might have prevented only three out of nine known crashes. Three out of nine. Meanwhile, peer-reviewed research covering 56.7 million miles traveled by Waymo’s rider-only cars found measurably fewer crashes and serious injuries than with human drivers, including fewer intersection crashes.

Scrutiny on Every Front

NHTSA is running three separate investigations into Tesla. The main probe, about reduced visibility, involves 3.2 million cars. Another focuses on traffic violations and covers nearly 2.9 million vehicles after investigators found dozens of questionable incidents. The third concerns Tesla’s crash reporting practices. In California, a judge decided Tesla’s names for its driver-assist features, such as “Autopilot” and “Full Self-Driving,” were misleading and recommended suspending Tesla’s license for 30 days unless it changed its branding. The DMV put that penalty on hold, giving Tesla two months to update its names. The judge called Tesla’s “Full Self-Driving Capability” branding “actually, unambiguously false and counterfactual.”

Accountability in Court

In August 2025, a jury found Tesla partly responsible for a fatal 2019 Autopilot crash in Key Largo, Florida, that killed 22-year-old Naibel Benavides Leon. Tesla received 33% of the fault. The verdict required tens of millions in compensatory damages and $200 million in punitive damages, totaling about $243 million. Tesla had turned down a $60 million settlement offer before the trial. Afterward, the company settled several more Autopilot crash lawsuits before they could reach a jury. In one case, the plaintiffs hired a specialist to recover evidence from the car’s computer chip after Tesla said the data was missing.

Liability: Two Different Roads

In July 2025, BYD announced it would take full responsibility for crashes involving its Level-4 “God’s Eye” autonomous parking feature in China. If the system is at fault, the company pays for repairs, third-party damage, and personal injuries, with no insurance claim required. A month earlier, Saint Amour’s Cybertruck had headed toward an overpass barrier with her and her baby inside before she intervened. Tesla classifies FSD as Level 2, which means the driver, not the company, is responsible if something goes wrong. BYD’s approach shows that broader liability-sharing is a policy choice. Tesla’s system still requires constant human supervision and places legal responsibility on the human driver.

Almost Perfect, Always Risky

Krikorian put it clearly: “A machine that constantly fails keeps you sharp. A machine that works perfectly needs no oversight. But a machine that works almost perfectly—that’s where the danger lies.” Studies from the IIHS and other groups show that after a few weeks with partial automation, such as adaptive cruise control, drivers start looking at their phones or losing focus. The system encourages inattention, then demands instant reaction when something goes wrong. Most people need five to eight seconds to reengage, but crashes can happen in less than a second. NHTSA’s Engineering Analysis phase usually concludes within 18 months and often leads to a recall decision.

Millions Still at Risk

Each of those 3.2 million cars remains on the road, operating with the same camera-only system linked to nine reduced-visibility crashes. Tesla removed radar in 2021 and ultrasonic sensors by 2023, relying only on cameras. The Dawn Project ran FSD v13.2.9 past a stopped school bus with a child mannequin eight times in a row; each time, the system did not stop, did not alert the driver, and did not disengage. The system hit the mannequin in every test. Tesla’s legal team is appealing large verdicts and lobbying NHTSA about any potential recalls. The families driving those cars have no one to lobby for them.

Sources:

NHTSA – Engineering Analysis EA26-002 on Tesla “Full Self-Driving” reduced-visibility performance – March 19, 2026

Electrek – “Tesla is one step away from having to recall FSD in NHTSA visibility investigation covering 3.2 million vehicles” – March 18, 2026

The Atlantic – “My Tesla Was Driving Itself Perfectly—Until It Crashed” (by Raffi Krikorian) – March 16, 2026

Electrek – “Former Uber self-driving chief crashes his Tesla on FSD, exposes the supervision problem” – March 16, 2026

California DMV / Administrative Decision – “Tesla Takes Corrective Action to Avoid DMV Suspension” – February 17, 2026

Insurance-Canada / BYD release – “China’s BYD takes on liability for its new Level-4 autonomous parking system, pledges to cover losses” – July 14, 2025

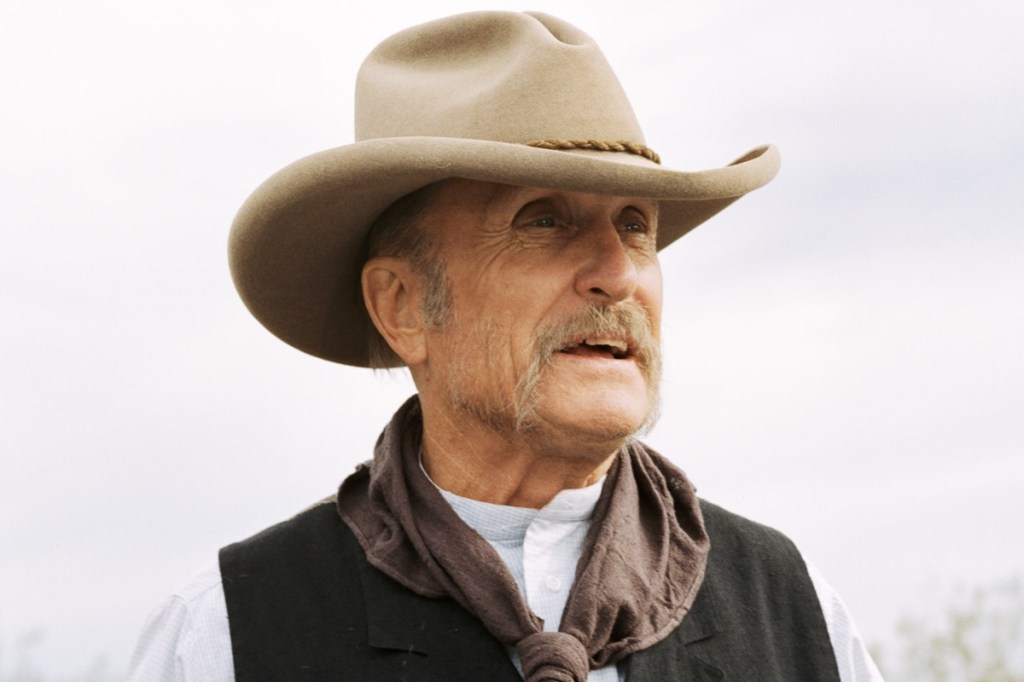

Start Your Engines with Bama Cooley
